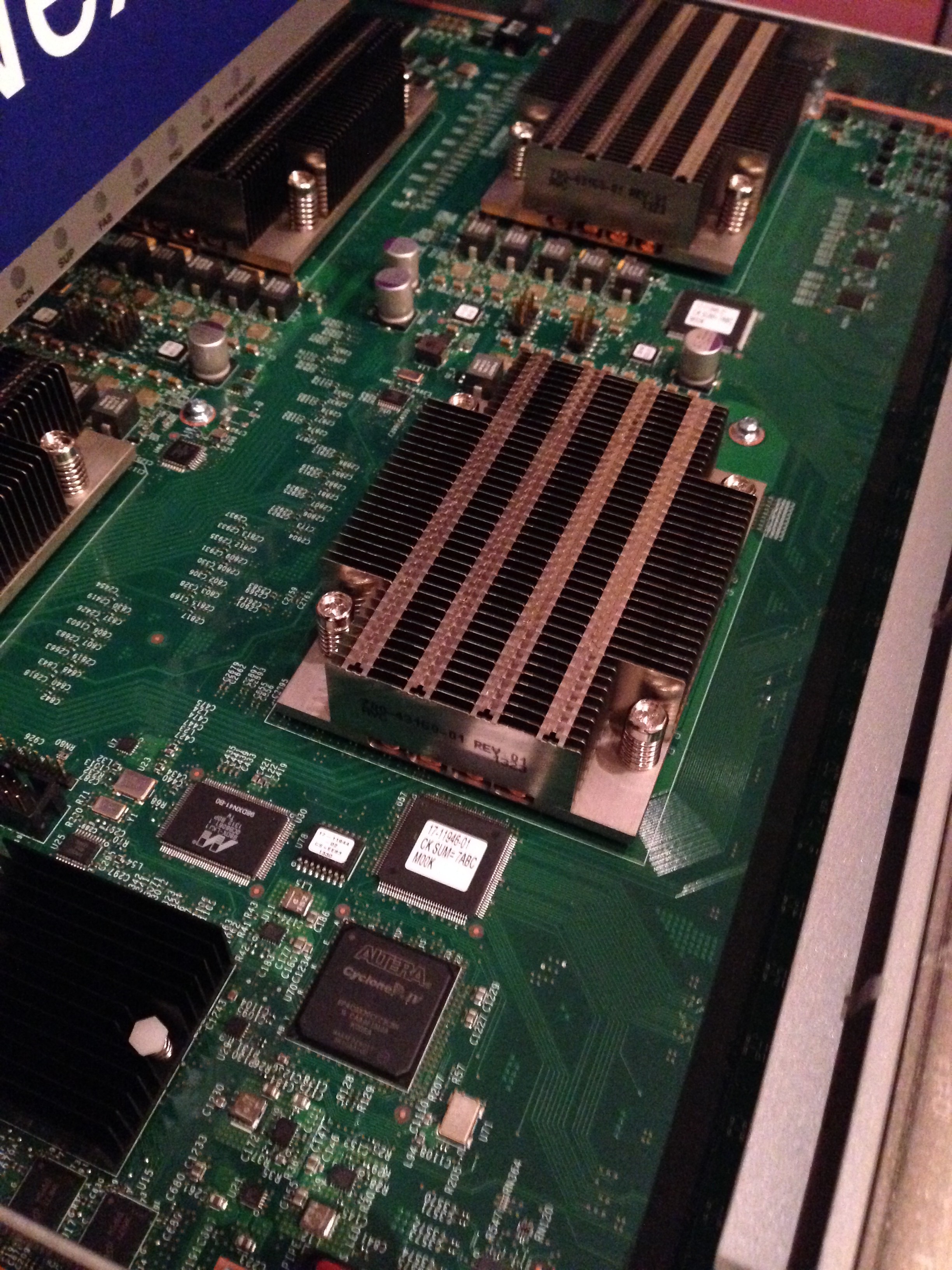

At the Cisco Live 2013 event in London this January, Cisco gave attendees a sneak peek at the about-to-be-announced Network Analysis Module (NAM) for, amazingly enough, the Nexus 7000 series switches. In fact, it is the first – and currently only – service module for the Nexus 7k.


So now that the NAM has been officially announced, I wanted to take a quick look at the product itself, and consider what it might herald for the Nexus 7k lineup.

Nexus Service Modules

Let’s rewind the clock a few years. The Nexus 7000 was first presented to me by Cisco while I was working for a client with a huge existing installed base of 7600 switches (“routers” if you prefer). Naturally one of our first concerns was the migration path for our service modules (NAM, ACE and CSM for example). The answer we were given was pretty straightforward – there aren’t any service modules for Nexus, and none are planned. Huh? So if I want to move to the Nexus 7k platform but continue to have, say, ACE load balancing functionality* in the network, I either have to migrate to ACE appliances, or I have to continue purchasing 7600 chassis in order to support the existing service modules.

* I am going to avoid the argument about how good that functionality actually was.

But that was the deal: basically the Nexus was a fast, high capacity packet mover, and was not likely to get service modules any time soon. On one level, it made sense – each slot on the first generation Nexus 7k supported a theoretical 230Gbps of throughput (assuming that you had five Fabric-1 modules installed). Typical 6500/7600 service modules have pretty meager capacities. For example:

- Service and Application Module for IP (SAMI) => 16Gbps

- Network Analysis Module (NAM-3 on 6500-E only) => 15Gbps

- Application Control Engine (ACE) => 10Gbps

- Firewall Services Module (FWSM) => 6Gbps

- Content Switching Module (CSM) => 4Gbps

- Network Analysis Module (NAM-2) => 1Gbps

There is an argument that there would be little point migrating the existing modules to a new form factor. After all, would you, as a user, really blow out an entire 230Gbps slot with a 10Gbps Nexus ACE module, and waste 220Gbps? Does that really justify taking up one of your precious slots? Well, actually it might – depending on what’s important to you – but it does feel kind of wrong, doesn’t it?
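To put numbers on that "waste", here is a small sketch that compares each module's quoted throughput with a first-generation slot. It assumes the 230Gbps figure comes from five Fabric-1 modules at 46Gbps per slot each; the dictionary keys are just shorthand labels for the modules listed above.

```python
# First-generation Nexus 7000 slot bandwidth, assuming five Fabric-1 modules
# each contributing 46 Gbps to every slot (5 x 46 = 230 Gbps).
FABRIC1_GBPS_PER_MODULE = 46
FABRIC_MODULES = 5
SLOT_GBPS = FABRIC1_GBPS_PER_MODULE * FABRIC_MODULES

# Throughput figures for 6500/7600 service modules, as listed above.
SERVICE_MODULES_GBPS = {
    "SAMI": 16,
    "NAM-3": 15,
    "ACE": 10,
    "FWSM": 6,
    "CSM": 4,
    "NAM-2": 1,
}


def slot_usage(module_gbps: float, slot_gbps: float = SLOT_GBPS) -> tuple[float, float]:
    """Return (fraction of the slot used, Gbps left idle) for one module in one slot."""
    return module_gbps / slot_gbps, slot_gbps - module_gbps


if __name__ == "__main__":
    for name, gbps in SERVICE_MODULES_GBPS.items():
        used, idle = slot_usage(gbps)
        print(f"{name:6s} {gbps:>3} Gbps uses {used:6.1%} of the slot, leaving {idle} Gbps idle")
```

Even the fastest module on the list, the SAMI, would use well under a tenth of the slot.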

I actually wondered whether we might see some kind of Service Module Carrier blade that could accept multiple service modules within it (similar to the 7600’s SIP/SSC). Sadly I also suspect it just wouldn’t be possible to get enough components onto a small sub-module (let alone the potential thermal issues of packing that much CPU in a single slot).

And So, the NAM-NX1

On the 6500/7600 platform there is up to 40Gbps available per slot, and the NAM module (WS-SVC-NAM-2) as mentioned above can handle a whopping 1Gbps of traffic. I’d wager that anybody who has used that NAM has discovered that 1Gbps is just a little on the ‘underspecified’ side for a chassis supporting 10Gbps connectivity. Worse, anybody who has tried to run a ‘both’ way (rx and tx) SPAN to a NAM-2 blade that amounted to more than 1Gbps of traffic will likely have discovered that the resulting bottleneck of traffic going to the NAM module was enough to stall the backplane. And hilarity does not ensue.
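Part of the reason it goes wrong so quickly is that a ‘both’ SPAN copies the receive and transmit streams, so a single busy 10Gbps port can mirror up to 20Gbps. A rough sketch of the arithmetic follows; the 30%/20% utilization figures are hypothetical, and the function names are illustrative rather than any Cisco tool.

```python
def span_load_gbps(port_gbps: float, rx_pct: float, tx_pct: float, direction: str = "both") -> float:
    """Gbps copied to the SPAN destination for one source port.

    rx_pct / tx_pct are the source port's average utilization, in percent.
    direction mirrors the usual SPAN choices: "rx", "tx" or "both".
    """
    rx = port_gbps * rx_pct / 100
    tx = port_gbps * tx_pct / 100
    if direction == "rx":
        return rx
    if direction == "tx":
        return tx
    if direction == "both":
        return rx + tx
    raise ValueError(f"unknown direction: {direction!r}")


def oversubscription(load_gbps: float, destination_gbps: float) -> float:
    """Ratio of mirrored traffic to what the destination can absorb (> 1 means trouble)."""
    return load_gbps / destination_gbps


if __name__ == "__main__":
    NAM2_GBPS = 1.0  # NAM-2 capacity quoted above
    # Hypothetical source: a 10 Gbps uplink averaging 30% rx and 20% tx.
    load = span_load_gbps(10, 30, 20)
    print(f"mirrored load: {load} Gbps, {oversubscription(load, NAM2_GBPS):.0f}:1 over a NAM-2")
```

A moderately loaded uplink already oversubscribes a NAM-2 five to one, and a saturated port in both directions reaches twenty to one.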

So with that in mind, Cisco have announced a NAM service module for the Nexus 7000, a chassis for which 10Gbps is the bread and butter port speed. Given the 230Gbps per slot capacity even for the first gen fabric cards, I think we can expect significant throughput to be available on the chassis, so I assume that the new NAM-NX1 must have seriously high bandwidth capabilities. Checking the data sheet reveals … nothing. In fact the only reference I could find to the performance was this:

- Two x86 CPU clusters, with a total of four 8-core CPUs and hardware-based packet acceleration, offering high-performance Gigabit Ethernet monitoring performance

I’m not sure what “high-performance Gigabit Ethernet monitoring performance” means, but that sounds awfully like it can deal with only Gigabit data speeds. Multi-gigabit? Well, it’s unclear; I’ll make inquiries and let you know. But am I wrong to hope for the ability to capture at least, say, 15Gbps to match the NAM-3 (which runs on an arguably lesser platform)? I’m sure the omission of this performance figure is something that will be rectified soon.

And is the NAM’s backplane connectivity non-blocking? Well, we assume so – as that’s a fundamental selling point of the Nexus backplane. Since the arbiters won’t let traffic on to the fabric unless there’s space for it to exit, the problem – if any – would presumably move to the input queues. I’ll be interested to see if this becomes a problem if there’s a significant oversubscription between SPAN source and the NAM destination.
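A crude way to think about that input-queue problem is a fluid model: if the fabric only admits what the NAM can drain, the excess piles up at ingress until the buffer is full, and then it is dropped. This is a sketch under loudly stated assumptions: constant offered load, a single ingress buffer, and a buffer size (100MB in the usage note) that is purely hypothetical, not a published Nexus figure.

```python
import math


def seconds_until_full(offered_gbps: float, drain_gbps: float, buffer_mbytes: float) -> float:
    """Time for an ingress buffer to fill when offered load exceeds what the NAM drains.

    Fluid model: the arbitrated fabric admits only what the destination can take,
    so the excess (offered - drain) accumulates in the ingress buffer.
    """
    excess_gbps = offered_gbps - drain_gbps
    if excess_gbps <= 0:
        return math.inf  # the NAM keeps up; no queue builds
    buffer_gbit = buffer_mbytes * 8 / 1000  # decimal MB -> Gbit
    return buffer_gbit / excess_gbps


def gbit_dropped(offered_gbps: float, drain_gbps: float, buffer_mbytes: float, duration_s: float) -> float:
    """Traffic discarded at ingress during a sustained burst lasting duration_s seconds."""
    excess_gbps = max(0.0, offered_gbps - drain_gbps)
    buffer_gbit = buffer_mbytes * 8 / 1000
    return max(0.0, excess_gbps * duration_s - buffer_gbit)
```

For example, 5Gbps of mirrored traffic into a 1Gbps analyzer fills a hypothetical 100MB buffer in about 0.2 seconds, and a one-second burst then loses roughly 3.2Gbit. The point is only that buffering buys milliseconds, not a fix for sustained oversubscription.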

Who Wants a NAM Anyway?

I’m always torn about NAMs. They blow out a slot that I’ve so often needed for something more important – you know, like, actual network ports. On the other hand, they’re so easy to provision and start capturing traffic that it’s an easy sell in many environments. Without a NAM, you likely end up putting in taps on uplinks or on other key links, and then that usually means you need a layer 1 switch of some sort in order to dynamically feed the appropriate data to your dedicated capture appliance – assuming, that is, you don’t just give up and wheel a cart around the data center and connect it up as needed. I’ve been using NAMs of some sort since the Catalyst 5000 NAM (which as I recall was basically a 4-port FastEthernet NetScout probe on a blade). It seems that I’ve been unable to escape them since then, with NAM-1 and NAM-2 on the 6500/7600 series switches at pretty much every client I’ve worked for. On that basis, I strongly suspect I’ll be seeing them deployed in the Nexus 7k as well. They’re supposed to be available some time in the first half of 2013, so watch this space! Hopefully between now and then the published specifications will be firmed up a little bit.

NAM-NX1 and vNAM

As part of a broader picture, the NAM-NX1 is being positioned – along with the new Virtual NAM (vNAM) – as a solution that allows you to monitor traffic flows end-to-end, whether in the data center or in the cloud. The vNAM is interesting – it’s a virtual appliance that can be installed on ESXi, Hyper-V or KVM platforms, and it supports analysis (via license) of up to 1Gbps of traffic. Cisco is very clear that if you need more than 1Gbps, you need to look to one of the other NAM products. Still, as a virtual appliance, it would be fairly simple to deploy multiple vNAM instances, for example at remote sites where local bandwidth requirements may be lower. The vNAM is also scheduled to be available some time in the first half of 2013.
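That 1Gbps ceiling makes vNAM placement a simple sorting exercise. The sketch below assumes one vNAM per site and uses each site’s peak monitored bandwidth as the deciding figure; the site names and numbers in the usage note are hypothetical.

```python
VNAM_MAX_GBPS = 1.0  # per-instance analysis limit quoted for the vNAM license


def plan_vnams(site_peak_gbps: dict[str, float]) -> tuple[list[str], list[str]]:
    """Split sites into those a single vNAM can cover and those that need
    one of the other NAM products instead (assumes one vNAM per site)."""
    vnam_sites: list[str] = []
    other_nam_sites: list[str] = []
    for site, peak in sorted(site_peak_gbps.items()):
        if peak <= VNAM_MAX_GBPS:
            vnam_sites.append(site)
        else:
            other_nam_sites.append(site)
    return vnam_sites, other_nam_sites
```

With peaks of 0.3Gbps at "branch-a", 0.8Gbps at "branch-b" and 12Gbps at "dc-core", the two branches fit a vNAM each, while the core is firmly hardware NAM territory.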

Both the NAM module and the vNAM offer Layer 2-7 deep packet inspection, including being able to look at data transported inside other protocols like OTV, FabricPath and VXLAN. You can feed data to both via SPAN, RSPAN, ERSPAN, VACL and so on. The vNAM sounds like it has a lot of potential, perhaps more than the NAM-NX1; but that may be the slot conservationist in me speaking. For some reason, blowing out server resources on a virtual appliance gives me less of a problem than occupying an entire slot with a NAM.
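Looking inside an encapsulation mostly means peeling off the outer header to reach the original frame. As an illustration only (this is not how Cisco implements it), here is a sketch for VXLAN, which normally rides on UDP destination port 4789 and prefixes the inner Ethernet frame with an 8-byte header carrying a 24-bit VNI. OTV and FabricPath use different encapsulations and are not handled here.

```python
import struct

VXLAN_FLAG_I = 0x08  # "I" flag: the VNI field is valid


def strip_vxlan(udp_payload: bytes) -> tuple[int, bytes]:
    """Return (VNI, inner Ethernet frame) from the payload of a VXLAN UDP datagram.

    VXLAN header layout (8 bytes): flags (1), reserved (3), VNI (3), reserved (1).
    """
    if len(udp_payload) < 8:
        raise ValueError("payload too short for a VXLAN header")
    flags, vni_word = struct.unpack("!B3xI", udp_payload[:8])
    if not flags & VXLAN_FLAG_I:
        raise ValueError("I flag not set; VNI is not valid")
    vni = vni_word >> 8  # top 24 bits of the last word; the low byte is reserved
    return vni, udp_payload[8:]
```

Once the inner frame is recovered, the analyzer can apply the same Layer 2-7 decoding it would use on an unencapsulated packet, with the VNI available as extra context.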

Nexus Service Modules – The Future?

When I heard the announcement about the NAM-NX1 module, I realized that the thing that interested me the most was not the NAM itself; it was the fact that somebody had green-lit a service module on the Nexus 7000 series. And I hear that this is just the first service module – there will be others showing up in the future. Given the mothballed state of the ACE, I think we can safely assume that other service modules will be filling this space. Will we be seeing the SAMI, or other more powerful “CPU on a blade” modules? WAN modules? I heard a couple of years back that the ASA had been ported to a Nexus blade as one of the internal “Science Fair” projects, but that it had not been accepted as a product to actively develop. Maybe that has changed and we’ll see a new, high-capacity security product (presumably ASA based) on a Nexus blade? Coupling a very high throughput firewall with the capacity of the Nexus 7k chassis could finally give Cisco a security product with which to seriously rival Juniper’s SRX.

So what service modules would you like to see for the Nexus 7k?

I’ve yet to see a service module that I would choose over a separate appliance.

CSM – Disgusting

CMM – Nearly ruined Cisco’s telephony solution (probably)

FWSM – Wacky, mandated configurations that make no sense for anyone

WiSM – you don’t like to use the 6500’s features anyway

ASA-M – Hi I’m brand new. I require a 6500 to work in your Nexus DC

IPSEC Module – When your vendor doesn’t even recommend this, you know it is bad

NAM – Was awful – hard to provision unless you ordered one for every single switch. It made you rely on RSPAN. It had no decent analysis, and its NetFlow modules were silly at best. Its tiny, seemingly 20MB, hard drive allowed for ~20ms captures. In many networks these are just ‘there’, unconfigured, and replaced with an alternate solution.

Nexus 7000 – This NAM changes things a bit. Cisco heralds it as the easiest way to prevent packet loss in your captures, since it’s built into the backplane. It also supports FCP directly to your SAN for huge amounts of capture.

This is all great, but if it does not provide awesome analysis like ARX or InfiniStream, then it is probably a waste of money. I’m looking forward to seeing what the ‘real’ capabilities are as far as traffic analysis goes, if any.

CSM – even if you hate the product, it was better than the standalone CSS (mostly). Let’s not go there with the CSM-S versus adding an SCA for SSL offload. Let’s not talk too much about the ACE; I can’t afford the therapy.

The IPSec SPA didn’t do much, but in the places I installed it, the extra encryption throughput was very handy; I didn’t have any problems with it.

FWSM – yeah, well, that was special.

The NAM’s biggest problem in my opinion was the 1Gbps bandwidth limit for captures. The NAM2-250S had a 250GB SATA drive in it (not sure if they ever recognized more than 125GB, mind you), so the 20GB criticism isn’t really fair to the later versions. I agree though, you need them in every switch to be useful. Whether that’s a problem may depend on how many 6500s you had (we had a LOT), and whether you actually needed the slot to provide ports instead (we did). Capacities kept on scaling for the ports, but the NAM was left way behind and became more useless as time went on. The NAM-3 was amazingly late to market, and by the time it arrived most of us had moved on to other solutions.

I get the impression that with the N7K NAM, Cisco is trying to get back into that market. I really want to see more thorough specs though, to know that they’re serious about what they’re putting in a 7000 chassis.