Teridion claims to bring cloud optimized routing to dynamic content delivery.

The home page continues: “We go beyond traditional CDN and WAN optimization combining the best of SDN and NFV to generate a better QoS and QoE for customers of cloud-based content, application, and service providers.”

Got that? Perhaps it’s not the most succinct elevator pitch, but Teridion’s concept is at the very least interesting, and it’s a fascinating look at how the Internet both enables us and fails us in so many ways. Even if the product is not for you, the problem Teridion claims to solve is a good thought exercise in and of itself, and it brings to the forefront the reliance we place on the Internet despite the fact that we have no control over how our traffic traverses it.

Perhaps Morpheus is being slightly misleading in the image above, since this isn’t a product intended for purchase by home users, but otherwise the statement is pretty much true.

At its core, Teridion’s product concept is actually fairly simple. The Internet is used as a conduit to move data between locations around the world because it’s significantly more cost effective than purchasing private circuits. Software Defined WAN (SD-WAN), for example, sells itself in part on the promise that Internet bandwidth can be used to augment or replace private circuits at a lower cost. But using the Internet for data transit puts the user at the mercy of the varying transit policies of every service provider between the source and destination. Even if the source and destination are hosted by the same service provider, the customer has no control over that provider’s internal routing.

Worse, while BGP is an incredibly flexible protocol, it’s also a very blunt tool in some ways. As a path vector protocol, its finest granularity is the BGP Autonomous System, and service provider business and peering policies can override what common sense might indicate would be the best path to a destination. In terms of nuanced dynamic path control based on latency, load, and loss, using BGP to optimize Internet routing can be like trying to cut butter with a hammer.

Teridion

(engages Morpheus voice) But what if you could put devices at a large number of locations on the Internet and measure the performance between all of them? And what if you could then calculate your own best paths between locations, choosing each path purely on measured performance; effectively an overlay routing protocol? And then what if you could use that knowledge to tunnel data from location to location over these performance-optimized paths in order to force the data over a particular path rather than leaving it at the mercy of the service providers’ decisions? That’s a lot of “what ifs”, but Teridion claims to have gone beyond “what if” and moved to “So, how much data did you want to send?”

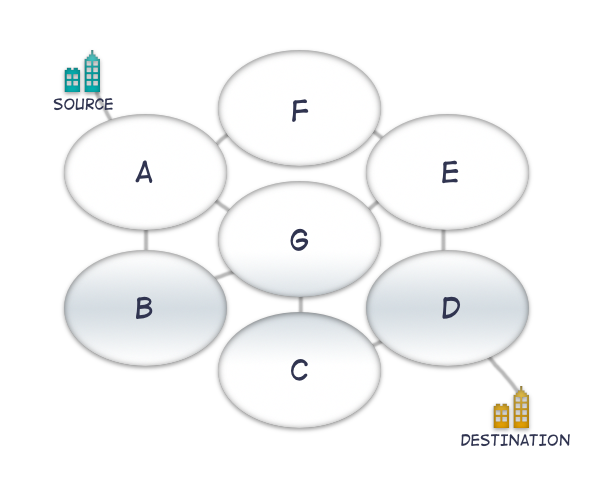

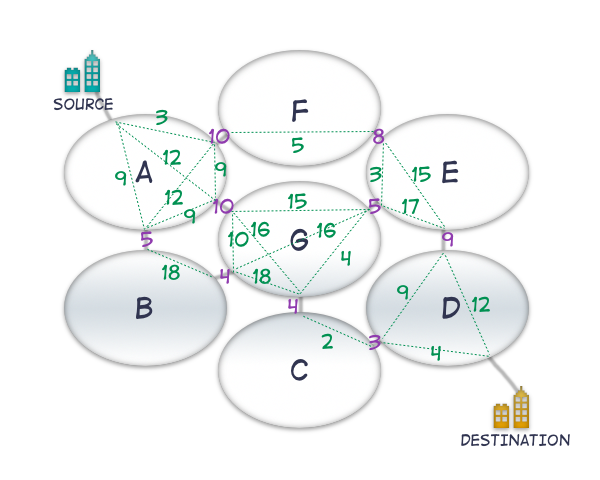

To look at this graphically, imagine a partial mesh of internet service providers looking like this, where each oval represents one BGP Autonomous System (AS), with grey lines indicating peering relationships:

To move traffic from the source to the destination, two paths seem like the best choices based on number of hops: A>G>C>D and A>F>E>D are four hops, versus A>B>G>C>D and A>B>G>E>D which are five hops:

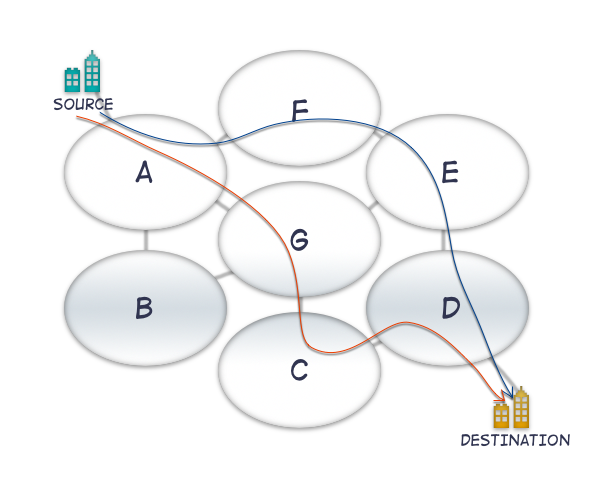

If all peering links are of equal bandwidth and latency, and if transit times and bandwidth through each service provider in this example are also equal, it would seem logical that a simple metric like AS path length would yield the shortest path to the destination. Unfortunately, these suppositions do not sound very much like the actual characteristics of the Internet. Peering links are of varying bandwidth, and the physical (geographical) routing of paths and the number of hops along the way mean that latency between any two locations may vary between providers. Similarly, the utilization of any given path can change at any time, causing queueing and thus additional latency and maybe even packet loss; loss may mean retransmissions, which effectively slow down data transfers. BGP takes almost none of the above into account, notwithstanding any traffic engineering being performed within a provider’s network. The end result is that the supposedly obvious path between two points in our example may not actually be the best path over which to send traffic.

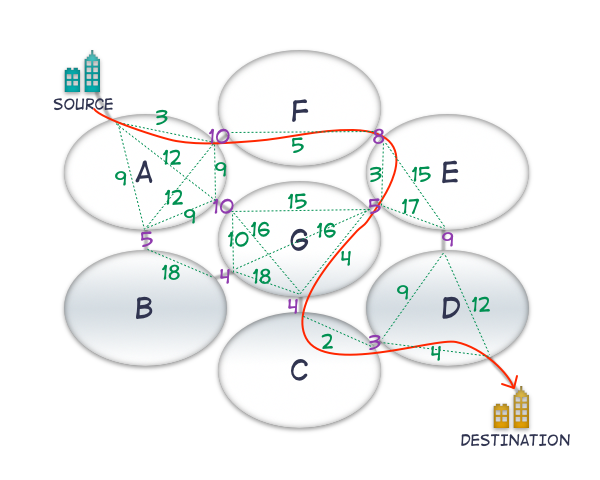

The diagram below shows some link latencies added to the peering links. If we assume that within each AS the latency remains the same, how does our optimal routing look based on latency?

The path A>B>G>C>D begins to look like the most attractive, but it’s unlikely to be chosen by BGP. Our calculation doesn’t take into account the latency within each AS, however, so the diagram below has latency figures between each peering point added as well:

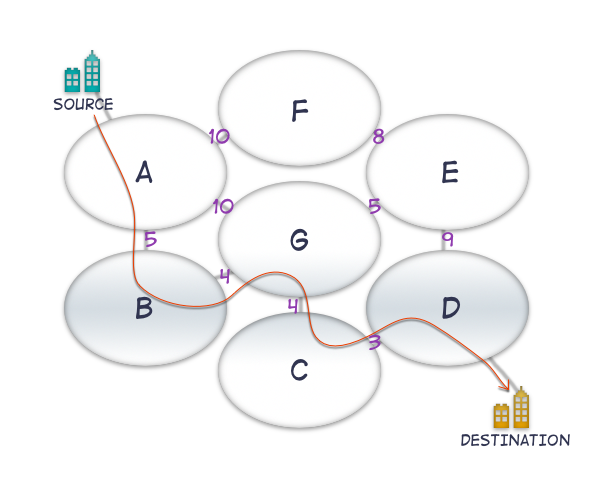

The calculations are getting a little more cumbersome now, so I will reveal (with drum roll) that the lowest cost path in this case is in fact A>F>E>G>C>D:

Somehow the lowest cost path now involves six service providers, compared to the original, more intuitive, four. Other factors could be considered as well, like packet loss, available bandwidth, jitter, and so forth. It should be clear that the calculation can get very complex, depending on which factors are evaluated, and that it might also produce a desirable path that is not the one BGP would choose by default. This is the problem that Teridion is solving; the product offers a way to manipulate the path that traffic takes across the Internet. Service providers won’t accept source-routing by customers, so the real question is how Teridion achieves this magic.
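For anyone who wants to play with the comparison, here is a minimal Python sketch of the same exercise. The peering links are inferred from the paths named in the text, but every latency number is hypothetical (the figures from the diagrams are not reproduced here), and modeling each AS with a single transit latency is a simplification of the per-peering-point figures described above. It uses plain Dijkstra and says nothing about how Teridion actually performs its calculation.

```python
import heapq

# Peering links inferred from the paths named in the text. Every latency
# value (ms) is hypothetical; the article's diagram figures are not used.
LINK_MS = {
    ("A", "B"): 5, ("B", "G"): 5, ("G", "C"): 5, ("C", "D"): 5,
    ("A", "F"): 6, ("F", "E"): 6, ("E", "G"): 6,
    ("A", "G"): 30, ("E", "D"): 30,
}
# Hypothetical latency (ms) for crossing each AS; B is deliberately slow.
TRANSIT_MS = {"A": 2, "B": 20, "C": 2, "D": 2, "E": 2, "F": 2, "G": 2}


def neighbours(links):
    """Build an undirected adjacency list from the link table."""
    adj = {}
    for (a, b), ms in links.items():
        adj.setdefault(a, []).append((b, ms))
        adj.setdefault(b, []).append((a, ms))
    return adj


def lowest_latency_path(links, src, dst, transit=None):
    """Dijkstra over link latency plus optional per-AS transit latency."""
    transit = transit or {}
    adj = neighbours(links)
    heap = [(transit.get(src, 0), [src])]
    settled = set()
    while heap:
        cost, path = heapq.heappop(heap)
        node = path[-1]
        if node == dst:
            return cost, path
        if node in settled:
            continue
        settled.add(node)
        for nxt, ms in adj[node]:
            if nxt not in settled:
                step = ms + transit.get(nxt, 0)
                heapq.heappush(heap, (cost + step, path + [nxt]))
    return None  # dst is unreachable from src


links_only = lowest_latency_path(LINK_MS, "A", "D")
with_transit = lowest_latency_path(LINK_MS, "A", "D", TRANSIT_MS)
print(">".join(links_only[1]), links_only[0])      # A>B>G>C>D 20
print(">".join(with_transit[1]), with_transit[0])  # A>F>E>G>C>D 40
```

With these made-up numbers, link latency alone favors A>B>G>C>D, and adding a slow transit through B tips the result to the six-provider path A>F>E>G>C>D, neither of which is among the four-hop paths that AS path length would prefer.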

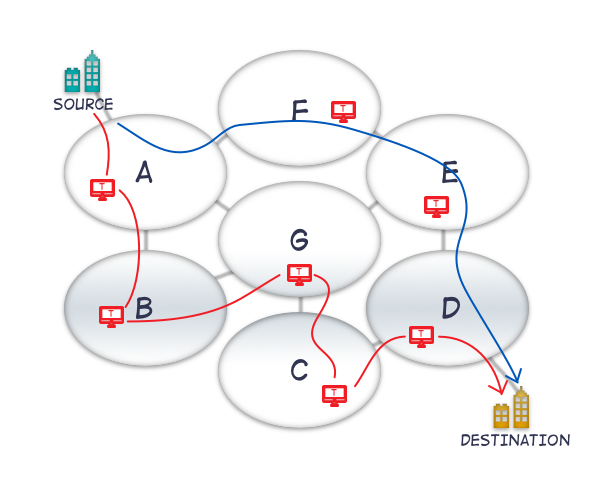

It’s All In The Cloud

Teridion, as I understand it, has servers deployed in a number of locations, with a number of cloud providers which peer into many service providers. The servers run various tests between one another in order to estimate the Internet’s performance over those paths, and from that data it’s possible to create an overlay which represents the optimal paths between each server location. By having servers using address space within particular service providers, it’s possible, for example, to send traffic between two adjacent service providers and persuade the ‘next hop’ to go a particular way, even if the ingress provider would otherwise route data toward the customer’s final destination over another path. Teridion customers can send traffic to the Teridion Global Cloud Network (GCN) overlay so that it can make its way to the destination by being tunneled across the optimal path that was calculated by the servers.

In the diagram above, the blue path is an example of the default path without optimization, and the red lines show the optimized path through Teridion’s overlay network. It seems implicit that the overlay network can scale (within reason), as nodes within cloud providers make it fairly easy to add more x86 servers as needed. It’s worth adding that it’s possible to select regions which should be avoided (e.g. for compliance reasons) and the overlay will not route through those.
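The general idea of an overlay built from node-to-node measurements, including the ability to steer around a region, can be sketched in a few lines of Python. To be clear, this is my own illustration and not Teridion’s algorithm: the node names, region labels, probe samples, and the choice of median RTT as the metric are all assumptions made up for the example.

```python
from itertools import permutations
from statistics import median

# Hypothetical RTT samples (ms) from probes between overlay nodes.
PROBES_MS = {
    ("nyc", "lon"): [88, 90, 97],
    ("nyc", "fra"): [84, 85, 91],
    ("nyc", "sin"): [250, 260, 300],
    ("lon", "fra"): [14, 15, 15],
    ("lon", "sin"): [205, 210, 240],
    ("fra", "sin"): [148, 150, 155],
}
# Hypothetical mapping of each overlay node to a region label.
REGION = {"nyc": "us", "lon": "uk", "fra": "eu", "sin": "apac"}


def link_cost(a, b):
    """Median RTT between two nodes, looked up in either direction."""
    samples = PROBES_MS.get((a, b)) or PROBES_MS[(b, a)]
    return median(samples)


def best_overlay_path(src, dst, avoid_regions=(), max_relays=2):
    """Try the direct path and every relay chain up to max_relays long."""
    relays = [n for n in REGION
              if n not in (src, dst) and REGION[n] not in avoid_regions]
    candidates = []
    for k in range(max_relays + 1):
        for chain in permutations(relays, k):
            path = [src, *chain, dst]
            cost = sum(link_cost(a, b) for a, b in zip(path, path[1:]))
            candidates.append((cost, path))
    return min(candidates)


print(best_overlay_path("nyc", "sin"))                        # relays via fra
print(best_overlay_path("nyc", "sin", avoid_regions={"eu"}))  # falls back to direct
```

With these sample numbers, relaying through the Frankfurt node (235 ms) beats the direct New York to Singapore path (260 ms), and excluding the "eu" region pushes the choice back to the direct path. Brute-force enumeration is only reasonable for a handful of nodes; a real overlay would need a proper shortest-path algorithm and a richer metric than median RTT.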

And So What?

The question is, while this is all very cool, does it actually make any difference by the time the data has been tunneled and pushed through an overlay? Teridion says yes.

Actually, Teridion doesn’t just say “yes”; it says it offers throughput that is 5 to 20 times more than current internet speeds. Yes, that was five to twenty times more throughput. Most of this appears to be achieved by delivering predictable, lower latencies; that is, with reduced jitter. It kind of sounds like magic, doesn’t it?
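One way to get a feel for why path quality can move throughput by such large multiples is the well-known Mathis approximation for loss-based TCP, where steady-state throughput is roughly (MSS / RTT) × (C / √p), with p the packet loss rate and C ≈ √(3/2). The sketch below is my own back-of-envelope illustration, not Teridion’s explanation of its numbers, and the RTT and loss values are hypothetical.

```python
from math import sqrt


def mathis_throughput_mbps(mss_bytes, rtt_ms, loss_rate):
    """Approximate steady-state TCP throughput using the Mathis model."""
    rtt_s = rtt_ms / 1000
    bytes_per_s = (mss_bytes / rtt_s) * (sqrt(1.5) / sqrt(loss_rate))
    return bytes_per_s * 8 / 1e6


# Hypothetical paths: default routing versus an optimized overlay path.
default_path = mathis_throughput_mbps(1460, rtt_ms=200, loss_rate=0.01)
optimized_path = mathis_throughput_mbps(1460, rtt_ms=100, loss_rate=0.0004)
speedup = optimized_path / default_path

print(f"default:   {default_path:.2f} Mbps")
print(f"optimized: {optimized_path:.2f} Mbps")
print(f"speedup:   {speedup:.1f}x")
```

In this model, halving the round-trip time doubles throughput and cutting loss by a factor of 25 multiplies it by five, so the combination yields a tenfold improvement for a single long-lived flow. Real traffic, newer congestion control algorithms, and multiple parallel connections will all behave differently, so treat this as intuition only.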

Who cares about this kind of improvement? Content Delivery Networks (CDN) do, for one. Software As A Service (SaaS) companies could benefit similarly, by improving performance and thus their users’ experience. Enabling Teridion requires a CNAME change to point to a Teridion node or nodes instead of the final endpoint, and that’s enough to allow the Teridion GCN to grab the traffic and optimize the transfer.

Let The Cynicism Begin?

First things first: this is definitely not the first time this has been attempted; I know of at least one company who tried the exact same thing for their own purposes. It may, however, be the first time it has been productized for use outside a private organization.

It also just sounds too good to be true. I can see the logic in the product, but even with that knowledge, 5-20 times more throughput sounds, well, unbelievable. Initially, I was highly suspicious of the Teridion product and the amazing promises made about it. Part of that concern came because when I and others at the Networking Field Day session asked some probing questions about how exactly this worked, some answers given were vague, or pointed towards some kind of magic proprietary unicorn dust. I’m always a bit twitchy about trusting magic, because so much of it is sleight of hand. However, I’m also a sucker for a good client testimonial, and the fact that Teridion’s client list includes Box for example, suggests that perhaps there’s more to this than smoke and mirrors.

If nothing else, Teridion’s proposition should make us wonder about how bad our internet service actually is; not because of our service providers but because of the way the internet works — or doesn’t, as the case may be. I wonder how incredibly fast the internet could be if it ran the same kind of real-time performance-based protocols used by Teridion? Of course, there’s the potential rub: if everybody were to use the optimal path between two points, what’s to stop that path becoming saturated and thus no longer being a very optimal path? This is an issue I’m sure Teridion has to take into account. Until that actually manifests as a problem, however, it sounds like Teridion’s customers may be getting an express lane for their data transfers on a much more cost efficient basis than if they were to use private links.

What do you think? Does this sound amazing or insane?

Disclosure

I was an invited delegate to Network Field Day 12, at which Teridion presented. Sponsors pay to present to NFD delegates, and in turn that money funds my transport, accommodation and nutritional needs while I am there. That said, I don’t get paid anything to be there, and I’m under no obligation to write so much as a single tweet about any of the presenting companies, let alone write anything nice about them. If I have written about a sponsoring company, you can rest assured that I’m saying what I want to say and I’m writing it because I want to, not because I have to.

You can read more here if you would like.

Hey John, one question I have for you is just exactly how is the reverse path optimized? I mean the forward path seems pretty straightforward, but coaxing the first-hop router of the web server to chart a path back through the Teridion GCN to reach the client seems a bit more tricky.

In my limited understanding, I can only assume they must be toying with BGP (e.g. anycast BGP) to advertise more attractive routes to DFZ Internet routers in order to lure traffic into the GCN for specialized handling. Assuming that’s the case, one wonders how DNSSEC and BGPSEC might foil such plans? Although to be fair, given the current rate of progress, it’s a safe bet that Teridion would be in no immediate danger from either of these any time in the foreseeable future 🙂

Last, to address your concerns concerning performance and congestion, I think they’re counting on the horizontal auto-scaling capabilities of the virtual routers to be able to spin additional resources up and down as needed, or at least I’m sure that would be the ideal. Presumably the control plane would scale in direct proportion as well without negative performance impact, or possibly even improved efficiency.

it’s real, we have been using Teridion since the summer and it’s a key part of our production infrastructure for the Upthere Cloud Storage service.

If I am right they do TCP acceleration as well