Today Big Switch is formally announcing availability of their new “Big Cloud Fabric”, touted as “the industry’s first bare metal SDN data center switching fabric.” And that means what? Well, if you like the idea behind the Ethernet fabric solutions out there right now (Cisco’s ACI and FabricPath, Avaya’s Fabric Connect, and Juniper’s QFabric, to name but a few) but you don’t like the idea of being tied to a single vendor’s hardware, then this might be just the thing you’re looking for.

Put simply, Big Switch’s proposition is that you can buy your own ‘white box’ switches (from those detailed on the Big Switch Hardware Compatibility List), run the Switch Light OS on those switches, add the Big Cloud Fabric controllers, and voilà, you have your very own Ethernet fabric!

Big Switch

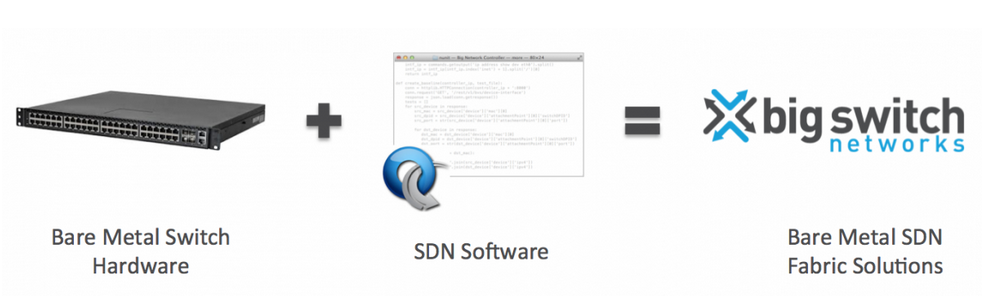

Big Switch have been around for a few years now. Their proposition has always been relatively simple, though arguably it requires a bit of a leap of faith to commit your company’s network to bare metal switches running a third-party OS.

There are a number of companies making these “white box” or “bare metal” switches, although since some of the bigger players have been building their hyperscale data centers using particular white box vendors, the economies of scale (and thus lower pricing) suggest that you’ll most likely end up buying something from Quanta or Accton. The point is, you get to choose what you want to deploy (Hardware Compatibility List notwithstanding), and since the solutions in use are based on the almost ubiquitous Broadcom Trident II chipset, capabilities are pretty consistent across the board. In fact, that equivalency is arguably both a blessing and a curse for white box manufacturers as it must be difficult to differentiate one product from another. The ‘big vendors’ may use the same chipset as the white box vendors, but they position their software as the big differentiator.

I digress. Despite the hesitancy some may have about jumping in feet first with bare metal, Big Switch chalked up their first $1M customer in the last year, and have customers across verticals including Federal Government, Financial, Service Provider and Hyperscale. So clearly at least some people feel that this is a smart move to make.

Building a Big Cloud Fabric

Once you have the controllers set up, adding a switch to the Big Cloud Fabric is as simple as turning it on. Much like a PXE boot, a bare metal switch supporting ONIE (Open Network Install Environment) does not need an operating system pre-installed. Instead, it brings up its management Ethernet interface and uses DHCP to get an IP address and, courtesy of Option 5366, the address of the Big Cloud Fabric controllers, from which it can download an operating system and boot up running Big Switch’s Switch Light OS.
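To make the DHCP step a little more concrete, here is a minimal sketch of how a booting switch could pull controller addresses out of the options field of a DHCP reply. DHCPv4 options are encoded as code, length, value triples, with 0 as padding and 255 marking the end. The article doesn’t say how Option 5366 is actually encoded, so this sketch assumes a hypothetical site-specific option code (224) carrying one or more packed IPv4 addresses; treat the option number and payload layout as illustrative, not as what Switch Light or ONIE really do.

```python
import ipaddress

PAD, END = 0, 255  # DHCPv4 pad and end-of-options markers (RFC 2132)

# Hypothetical code for the "controller address" option. Codes 224-254 are
# reserved for site-specific use; this is NOT the article's Option 5366.
CONTROLLER_OPTION = 224


def parse_dhcp_options(data: bytes) -> dict:
    """Walk a DHCPv4 options field (code, length, value) into {code: value}."""
    options = {}
    i = 0
    while i < len(data):
        code = data[i]
        if code == PAD:          # single-byte padding, no length field
            i += 1
            continue
        if code == END:          # end of options
            break
        if i + 1 >= len(data):
            raise ValueError("truncated option header")
        length = data[i + 1]
        start, stop = i + 2, i + 2 + length
        if stop > len(data):
            raise ValueError(f"option {code} overruns the buffer")
        # Repeated codes are concatenated, as RFC 3396 specifies for long options.
        options[code] = options.get(code, b"") + data[start:stop]
        i = stop
    return options


def controller_addresses(data: bytes) -> list:
    """Decode the hypothetical controller option as a list of IPv4 addresses."""
    raw = parse_dhcp_options(data).get(CONTROLLER_OPTION, b"")
    if len(raw) % 4:
        raise ValueError("controller option length must be a multiple of 4")
    return [str(ipaddress.IPv4Address(raw[j:j + 4])) for j in range(0, len(raw), 4)]


if __name__ == "__main__":
    lease_options = bytes([
        53, 1, 5,                                          # DHCP message type: ACK
        CONTROLLER_OPTION, 8, 10, 0, 0, 11, 10, 0, 0, 12,  # two controllers
        END,
    ])
    print(controller_addresses(lease_options))  # ['10.0.0.11', '10.0.0.12']
```

Returning a list rather than a single address reflects the fact that you would normally run the controllers as a redundant pair.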

Once booted, the switches show up in the management GUI categorized as “Other”, because they have not yet been given a role in a standard leaf-spine architecture. And yes, that’s basically the one supported architecture, although that’s not much of a limitation given how useful an architecture it is. Giving a new switch a role is as simple as dragging it from Other to Leaf or Spine. The controller uses the switch’s LLDP neighbor table to work out its neighbors in the topology, and configures it for the appropriate role. The new switch, like every other switch in the fabric, is configured and managed by the controller. In other words, the whole fabric is managed like, get this, one big switch. One Big Switch. See what I did there?
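As a rough illustration of what a controller can do with roles plus LLDP data, here is a sketch that turns per-switch LLDP neighbor lists into fabric links and flags anything that doesn’t fit a leaf-spine design (leaf-to-leaf or spine-to-spine cabling, or a leaf missing an uplink to some spine). The data model (plain dictionaries of roles and `(local_port, neighbor)` pairs) is an assumption for illustration; it is not Big Switch’s internal representation.

```python
from collections import defaultdict


def build_fabric(roles, lldp):
    """Derive fabric links from LLDP neighbor tables and sanity-check them.

    roles: switch -> "leaf", "spine" or "other" (hypothetical stand-in for the
           drag-and-drop role assignment in the GUI)
    lldp:  switch -> list of (local_port, neighbor_switch) learned via LLDP
    Returns (links, problems): links maps switch -> set of neighbor switches.
    """
    links = defaultdict(set)
    problems = []
    for switch, neighbors in lldp.items():
        for port, neighbor in neighbors:
            a = roles.get(switch, "other")
            b = roles.get(neighbor, "other")
            if "other" in (a, b):
                continue  # switches without a role are not part of the fabric yet
            if a == b:
                problems.append(f"{switch}:{port} connects {a} to {b} ({neighbor})")
                continue
            links[switch].add(neighbor)
    # In a leaf-spine design every leaf should have an uplink to every spine.
    spines = {s for s, r in roles.items() if r == "spine"}
    for switch, role in roles.items():
        if role == "leaf":
            for spine in sorted(spines - links.get(switch, set())):
                problems.append(f"{switch} has no link to {spine}")
    return dict(links), problems
```

A switch still sitting in “Other” is simply ignored, which mirrors the idea that it only becomes part of the fabric once you drag it into a role.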

Multi Tenancy

Given a typical environment with a wide range of virtual machine Ethernet ports and physical machine ports, the likelihood is that services or tenants will be spread across those resources, and you’ll want the network to let them talk to one another. The Big Cloud Fabric makes this pretty easy to accomplish.

Tenants and Logical Routers

A “Tenant” is the term used to describe an application or customer (whichever suits your needs) and represents a logical grouping of L2/L3 networks (perhaps a mixture of the physical and virtual ports I mentioned above). Network resources configured for a tenant are isolated from all other tenants’ resources, in the same way that a VRF isolates layer 3 information. To route traffic between subnets within a tenant, you create a logical router, which provides layer 3 interfaces (and, obviously, routing capability). If tenants need to pass IP traffic between each other, a logical System Router acts like a multi-VRF router connecting all the tenants together, and you define who can talk to whom through it. You’d still expect to have a regular layer 3 core outside the fabric to connect to the rest of the world, as it were.
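The reachability rules described above boil down to a simple decision, sketched here under a few assumptions: endpoints are `(tenant, segment)` pairs, every tenant has a logical router covering all of its segments, and the System Router is modeled as a set of permitted `(from_tenant, to_tenant)` pairs. The class and field names are hypothetical; this is a mental model, not the product’s configuration schema.

```python
from dataclasses import dataclass, field


@dataclass
class Fabric:
    # tenant name -> names of its L2 segments (hypothetical model)
    tenants: dict = field(default_factory=dict)
    # (from_tenant, to_tenant) pairs the System Router may route between
    system_router_allow: set = field(default_factory=set)

    def can_route(self, src, dst):
        """src/dst are (tenant, segment) pairs."""
        for tenant, segment in (src, dst):
            if segment not in self.tenants.get(tenant, set()):
                raise KeyError(f"{tenant}/{segment} is not defined")
        if src[0] == dst[0]:
            # Same tenant: the tenant's own logical router routes between segments.
            return True
        # Different tenants: isolated (VRF-style) unless the System Router
        # explicitly allows this direction.
        return (src[0], dst[0]) in self.system_router_allow
```

Making the allow list directional is a design choice here; it lets you say that an Internet tenant may reach the web tier without implying the reverse.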

Distributed Routing

Where this all gets clever is that the logical routers are not just a descriptive name: they don’t live on any one device, but are pushed down to every node in the network, while the System Router exists on all the spine nodes. Resolving ARP for a gateway IP returns the same MAC address wherever you are in the network, so the first-hop switch makes the routing decision locally, and the result is a full ECMP (Equal Cost Multi-Path) layer 2/layer 3 fabric.
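Two ideas are doing the work here: an identical gateway IP-to-MAC mapping installed on every leaf, and per-flow hashing across equal-cost uplinks. Here is a minimal sketch of both, assuming a hypothetical locally administered gateway MAC and using CRC32 over the 5-tuple as the hash; real switch ASICs use their own hash functions and field selections, so this only shows the principle.

```python
import zlib

# Hypothetical locally administered MAC used as the shared gateway address.
GATEWAY_MAC = "02:00:00:00:00:01"


def push_logical_router(leaves, gateways):
    """Give every leaf an identical copy of the gateway IP -> MAC table, so
    ARP for a gateway gets the same answer wherever a host is attached."""
    return {leaf: dict(gateways) for leaf in leaves}


def pick_uplink(flow, uplinks):
    """Choose one of several equal-cost uplinks for a flow.

    Hashing the 5-tuple keeps every packet of a flow on the same path (no
    reordering) while spreading different flows across all the uplinks.
    """
    src_ip, dst_ip, proto, src_port, dst_port = flow
    key = f"{src_ip}|{dst_ip}|{proto}|{src_port}|{dst_port}".encode()
    return uplinks[zlib.crc32(key) % len(uplinks)]
```

Because the gateway answer is the same everywhere, a VM that moves to another leaf keeps a valid ARP entry for its default gateway, and the new leaf can route its traffic immediately.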

Fabric Management

Managing the fabric comes down to picking your favorite interface. Under the hood the controller is pure REST API, and the CLI and GUI are really just REST API clients, so you can be confident that every command you can issue in the CLI or GUI is also available via the API for your own hardcore scripting and integration needs, should you have them.
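If the CLI and GUI are just REST clients, then your own scripts can be too. Here is a minimal sketch using only the Python standard library. The port (8443), the `/api/tenants` path and the bearer-token header are all hypothetical placeholders, since I haven’t seen Big Switch’s API documentation; the shape of the code (build a JSON request, send it, decode the JSON reply) is the part that carries over.

```python
import json
import urllib.request


def build_request(controller, path, token, body=None):
    """Build (but do not send) a JSON REST call to the fabric controller.

    The port, URL path and bearer-token header are placeholders for
    illustration, not Big Switch's documented API.
    """
    data = None if body is None else json.dumps(body).encode()
    return urllib.request.Request(
        f"https://{controller}:8443{path}",
        data=data,
        method="GET" if body is None else "POST",
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {token}"},
    )


def send(req, timeout=10):
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode())
```

For example, `send(build_request("controller.example.net", "/api/tenants", token))` would list tenants, assuming the controller exposed such an endpoint. Keeping request construction separate from sending makes it easy to inspect or log a call before it touches the fabric.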

Policy Management

Policies are the equivalent of Access Control Lists (ACLs) and support both filtering and Service Insertion (aka Service Chaining). It’s relatively simple from the GUI to add policies both between tenants and between L2 segments within a tenant. For example, you might want a policy to limit traffic from an Internet ‘tenant’ so that it can only talk to a web tier on a webapp tenant on ports 80 and 443, then police traffic from the web tier to the app tier within the webapp tenant. Service insertion means that you can also redirect traffic at any point to a third-party device such as a load balancer, proxy or firewall, so that the device sits “silently” inline.
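The example policy above can be modeled as an ordered, first-match rule list with an implicit deny, where a “redirect” action stands in for service insertion. This is a sketch under assumptions: the `tenant/segment` naming, the wildcard matching, the app-tier port 8080 and the `firewall1` device name are all made up for illustration and are not Big Cloud Fabric policy syntax.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Rule:
    src: str                          # "tenant/segment" pattern, "*" wildcards allowed
    dst: str
    ports: Optional[FrozenSet[int]]   # None means any destination port
    action: str                       # "permit", "deny" or "redirect"
    next_hop: Optional[str] = None    # service device used by "redirect"


def evaluate(rules, src, dst, port):
    """Return (action, next_hop) for the first matching rule; default deny."""
    for rule in rules:
        if (fnmatchcase(src, rule.src) and fnmatchcase(dst, rule.dst)
                and (rule.ports is None or port in rule.ports)):
            return rule.action, rule.next_hop
    return "deny", None


# The example from the text; the app-tier port and firewall name are made up.
POLICY = [
    Rule("internet/*", "webapp/web", frozenset({80, 443}), "permit"),
    Rule("webapp/web", "webapp/app", frozenset({8080}), "redirect", "firewall1"),
]
```

With this policy, Internet traffic to the web tier on 443 is permitted, anything else from the Internet tenant falls through to the default deny, and web-to-app traffic is steered through `firewall1` rather than simply allowed.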

Physical, Then Virtual

The first product, announced today for availability in Q3 2014, is the P-Clos solution, a leaf/spine fabric made up of the bare metal switches. Coming in Q4 (I believe) is the logical next step for the fabric: the Unified P&V Clos, which allows the same controllers to manage both the bare metal switches and Big Switch’s own Switch Light vSwitch. The network can then be controlled end to end from one place, from the hypervisor to the bare metal. This is a super cool idea that lends itself to many environments, not to mention the obvious opportunities for automation it offers.

Big Deal?

If you don’t like playing with the big vendors, this is probably a huge deal, because Big Switch is bringing to bare metal the same kind of integrated management as the other fabrics out there, and it all works out of the box without you having to make it work programmatically. Unless you want to, that is, in which case go API crazy! This could be very disruptive for the big players’ fabric offerings, providing a flexible entry point into a market that is turning out to be so proprietary.

Big Switch is partnering with a number of other vendors as part of their growing ecosystem, and expects to announce more detail about that soon. They’re a company that is pretty clear about where their expertise lies, and right now they’re honest that Layers 4–7 are an area where other people are the experts, and that they’d be much better off partnering with those experts to provide such services than trying to reinvent the wheel.

I can see there’s a lot more to learn about this solution, and I was excited to see that Big Switch is a sponsor for Networking Field Day 8 in September, to which I recently received an invitation. Based on today’s announcement, we are in for a good time.

Big Deal? Yes, I’d say so.

Disclosures

I attended a short pre-release Webex briefing hosted by Big Switch. My opinions remain my own, and as usual there is no quid pro quo for this stuff.
