Today, a technical challenge about the behavior of Juniper SRX firewalls. I’m curious to hear your opinions on this, but no cheating and labbing it up, because I want to hear what you think should happen (and, if different, what you think will happen).

In the next post I’ll talk about the answer.

Scenario

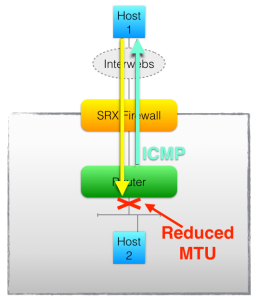

Imagine that I have a Juniper SRX high-end firewall protecting my network. My DMZ is composed of multiple public networks hanging off a router behind the firewall. Internal links all use RFC1918 addressing.

Ok? So traffic coming from the Internet (Host1) to hit one of my DMZ servers (Host2) will pass through the firewall, which (rules permitting) will forward it to the router to which the DMZ subnets are attached.

Background

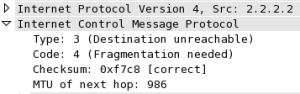

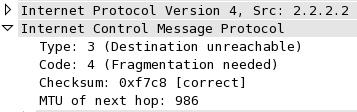

Imagine that one of the DMZ networks (for whatever reason) has a smaller-than-normal MTU configured on the router interface. When traffic is sent to this server from the Internet using the usual maximum packet size, a full-sized packet that has the DF bit set cannot be forwarded to the DMZ host (Host2). My Router then needs to generate a “Fragmentation Needed but DF-bit set” ICMP message (Type3/Code4) to let the sending host (Host1) know that there was a problem sending that particular packet onward towards the destination.

Who would choose to set the DF (Do not Fragment) bit? Well, any client running Path MTU Discovery (PMTUD), so quite a few of them in fact. Once the client receives an ICMP Type3/Code4 from a device along the traffic path, it can reduce the maximum packet size it will try to send to that destination to fit the available MTU; and that’s PMTUD. Without the ICMP message, traffic simply black holes: it is dropped by the Router with the MTU problem, the sender has no idea what’s going on, and it will retransmit as if the packet were lost. Needless to say, the retransmission is also lost.
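The PMTUD loop described above can be sketched as a toy simulation. This is only an illustration under simplifying assumptions: the names `FragNeeded`, `forward` and `discover_path_mtu` are made up for this example, every probe has DF set, and the ICMP error always makes it back to the sender (which is exactly the part the question is about).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FragNeeded:
    """Stand-in for an ICMP Type 3 / Code 4 message (hypothetical type)."""
    next_hop_mtu: int


def forward(packet_len: int, df_set: bool, link_mtu: int) -> Optional[FragNeeded]:
    """Model one hop: None means the packet went through (fragmented if needed);
    a FragNeeded error means it was too big and DF was set."""
    if packet_len <= link_mtu or not df_set:
        return None
    return FragNeeded(next_hop_mtu=link_mtu)


def discover_path_mtu(initial_mtu: int, link_mtus: List[int]) -> int:
    """Shrink the packet size until a DF-set packet crosses every link."""
    path_mtu = initial_mtu
    while True:
        error = None
        for link_mtu in link_mtus:
            error = forward(path_mtu, True, link_mtu)
            if error is not None:
                break  # packet dropped at this hop, error goes back to sender
        if error is None:
            return path_mtu
        # Sender lowers its estimate to the MTU reported in the ICMP error.
        path_mtu = error.next_hop_mtu


if __name__ == "__main__":
    # Internet link, firewall-to-router link, then a DMZ link with a small MTU.
    print(discover_path_mtu(1500, [1500, 1500, 1400]))
```

If the `FragNeeded` message never reaches the sender, the loop never gets its feedback and the sender keeps retransmitting at the original size, which is the black hole described above.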

Question

When the Router sends back an ICMP Type3/Code4 to Host1, the sender of the packet that it cannot transmit, what will the source IP of the ICMP packet be as seen when received by Host1? You may assume that the firewall is configured with a policy to NAT RFC1918 traffic outbound to the Internet.

Think carefully, and please let me know what you conclude. Results soon!

Totally prepared to exclaim, “DOH!” when I hear the correct answer, but I’m going to say the src IP of the packet when it hits Host1 will be the outside interface of the SRX (assuming that is the NAT pool being used for NAT’ing the RFC1918 address space).

My thinking is that the ICMP message from the router will come from its interface on the 192.168.1.0/29 subnet, and the SRX will NAT it.

If this by chance happens to be correct, I can see how it would be very confusing to someone looking at the ICMP response that H1 receives. i.e. it would look like the ICMP packet came from the SRX!

Thanks, Dave!

Having a source IP that isn’t the original destination IP is less confusing than it sounds. In fact it’s normal behavior for ICMP (think about it: that’s how traceroutes work). To help with that, the ICMP error includes the IP header of the original packet, plus at least the first 8 bytes of its payload, so Host1 can look inside the ICMP packet and discover that the flow this error refers to was the one it sent from Host1 to Host2. There’s enough information there to identify the precise flow affected, so the source IP of the ICMP packet should not affect Host1’s ability to know what broke.
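To make that concrete, here is a rough sketch of how a receiver could pull the affected flow out of an ICMP Type3/Code4 message, whatever the outer source IP happens to be. The function names and the sample addresses (taken from the documentation ranges) are invented for illustration, and the layout assumes the RFC 1191 format where the next-hop MTU sits in the last two bytes of the ICMP header.

```python
import socket
import struct


def parse_frag_needed(icmp: bytes) -> dict:
    """Extract the affected flow from an ICMP Type 3 / Code 4 message."""
    icmp_type, icmp_code, _cksum, _unused, next_hop_mtu = struct.unpack(
        "!BBHHH", icmp[:8]
    )
    if (icmp_type, icmp_code) != (3, 4):
        raise ValueError("not a Fragmentation Needed message")
    inner = icmp[8:]                 # original IP header + first payload bytes
    ihl = (inner[0] & 0x0F) * 4      # inner IP header length in bytes
    if len(inner) < ihl + 4:
        raise ValueError("embedded packet is truncated")
    # For TCP and UDP the first 4 payload bytes are source and destination port.
    src_port, dst_port = struct.unpack("!HH", inner[ihl:ihl + 4])
    return {
        "next_hop_mtu": next_hop_mtu,
        "protocol": inner[9],
        "src": socket.inet_ntoa(inner[12:16]),
        "dst": socket.inet_ntoa(inner[16:20]),
        "src_port": src_port,
        "dst_port": dst_port,
    }


def build_sample() -> bytes:
    """Build a sample message for a hypothetical Host1 -> Host2 TCP flow."""
    inner_ip = struct.pack(
        "!BBHHHBBH4s4s",
        0x45, 0, 1500, 1, 0x4000, 64, 6, 0,   # IPv4, DF set, TTL 64, TCP
        socket.inet_aton("198.51.100.10"),    # Host1 (made-up address)
        socket.inet_aton("203.0.113.20"),     # Host2 (made-up address)
    )
    inner_tcp = struct.pack("!HHI", 51000, 443, 0)  # first 8 bytes of TCP
    icmp_header = struct.pack("!BBHHH", 3, 4, 0, 0, 1400)  # checksum left at 0
    return icmp_header + inner_ip + inner_tcp


if __name__ == "__main__":
    print(parse_frag_needed(build_sample()))
```

Note that nothing in the parser looks at the outer IP header at all: the flow is identified purely from the embedded packet.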

OK, well *I* would have been confused, at least for a bit! =-)

For my own edification, the src IP of the ICMP message would have been the outside interface of the SRX, correct?

Thanks!

By the way – no ‘Doh!’ required here; I had to lab it up to validate what happens, the results of which make for this (and the next) post!

Without the benefit of any free SRXs to lab with, and with limited knowledge of them anyhow, I’d say the router’s external interface facing the SRX would be the outbound interface for sending back to Host 1… in which case no NAT would occur and Host 1 wouldn’t see crap. But, just a guess.

Guesses are good. Sometimes, in fact, they’re all we’ve got when we’re troubleshooting a problem. Labbing something up comes later on when we have more time and less outage!

j.

Been spending far too much time trying to analyze why this is happening without a box to lab it up on…. but I would guess the ICMP message wouldn’t look like it was part of the established conversational flow and the firewall would simply drop it (depending on how your policies are written). Had there been a switch instead of a router and the SRX saw the message from the host, I believe this would have worked.

BTW, the SRX supports TCP MSS Clamping. Basically the SRX would rewrite the MSS advertised in the TCP handshake so that segments fit the smaller MTU, which removes the need for the ICMP message and avoids all the internal network messiness. (http://www.juniper.net/techpubs/en_US/junos12.2/topics/example/session-tcp-maximum-segment-size-for-srx-series-setting-cli.html)

For sure – overriding MSS at ingress would certainly help for TCP connections at least. I suppose I should be nice and write the post with the actual answer in it so we can all feel silly / educated / smug, depending on our guesses. 😉

Thanks for the comment!

Well, I am not sure, but I would have guessed that the ICMP message would have spoofed the source to match the original destination (I wouldn’t have guessed it was in the payload data), and then the SRX would have seen the packet as new coming from Host and thus possibly NATting this ICMP packet (If even allowed by outbound policy), which of course would get thrown away by the recipient when it arrived, due to no known connection with that host. Just my guess. Anxiously awaiting the answer!

–=]NSG[=-