I’ve had network monitoring systems on my mind recently as we’ve been looking to determine the right specification for a number of fiber taps and aggregation devices so that we can fulfill the needs of both the security teams (for Intrusion Detection Systems and similar) and the network team for packet captures and troubleshooting. In reality we’d probably like to be able to have a mixture of full time monitoring (so we can pull statistics, or ‘rewind’ to a recent event and see what was happening on the network) as well as on-demand packet capture so we could troubleshoot specific problems.

As networks get bigger and faster though, the task of monitoring gets progressively more challenging as the number of devices, the number of links, and the speed of links increases.

I was interested, therefore, when at Networking Field Day 8 this last week we had no fewer than three monitoring solutions presented to us, each taking a different approach. And so, in alphabetical order, here’s what was presented.

Big Switch

Let’s start off with the obvious: Big Switch clearly believe they offer a better solution than Gigamon, which they claim (as an example of existing appliances and monitoring solutions) can’t monitor everywhere, does not have operational ease, and is not cost effective:

You can judge for yourself whether or not you believe that’s true when I outline the Gigamon solution, but it’s clear that Big Switch is trying to play straight into that space, and have done so with quite some success apparently, claiming their first $1M Big Tap customer in the last year.

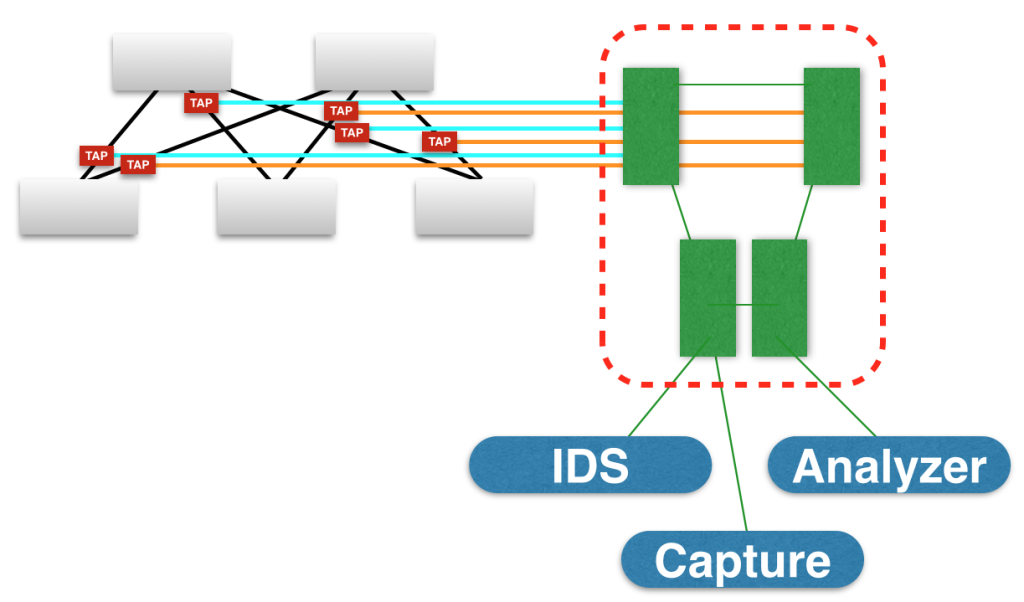

The reason behind Big Switch’s solution is that they believe customers want to be able to tap the network in every rack and, ideally, every vSwitch as well, with all the data pulled back to a central location (where your capture tools would live). While Big Switch isn’t proposing to sell you cheaper taps (I wish!), they are able to offer the opportunity to build your monitoring infrastructure using white box (bare metal) switches which are much cheaper than the “name brand” devices. The other big selling point is that the Big Tap fabric is managed by a Big Tap Fabric Controller, so you can configure it in one place rather than potentially having to log in to multiple boxes to funnel the chosen tapped traffic to the monitoring tool.

In the image above, imagine a network on the left, with many taps installed, all feeding into some kind of monitoring fabric (on the right). In order to monitor a particular flow based on source IP, say, you’d typically have to:

- figure out where the source IP is connected into the network

- figure out which tap that means the traffic will hit

- go to the monitoring switch that is connected to that tap

- configure the appropriate filter for the source IP and configure the feed upstream

- ensure that the correct upstream feed is going to your monitoring device of choice, let’s say a server that will capture the packets so you can download them when you’re ready.

That’s quite a lot of effort and Big Switch believe that they are able to make this simpler. The steps when using a Big Tap fabric are:

- Go to the controller GUI

- Locate the source IP or hostname from the list of hostnames that the fabric has already identified

- Configure a capture in the GUI using that host as a source, and determine how large you want the capture to be

- Click to download the resulting PCAP file from the controller when it’s done

All of this is done at the GUI without you having to log into any device. Big Switch also point out that this works for remote monitoring switches too – the source can be on an entirely different site, and you can still configure the monitoring session with the same ease, and let the fabric figure out how to get the captured traffic to the destination port (or to the controller). You do not of course have to use the controller for your captures; indeed if you run an IDS system for example, you would simply redirect traffic flows to the attached IDS port. This means your monitoring tools can all be in one place even though you have remote sites.
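To make the contrast between the two workflows concrete, here is a minimal Python sketch of the bookkeeping a controller takes off your hands: mapping a source IP to the tap and monitoring switch that sees it, then producing a single capture policy. Everything in it (the `HOST_TABLE` contents, the switch and port names, `plan_capture`) is hypothetical and for illustration only; it is not Big Switch's API or data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TapPoint:
    switch: str  # monitoring switch the tap feeds (hypothetical name)
    port: str    # filter/input port on that switch


@dataclass(frozen=True)
class CapturePolicy:
    src_ip: str
    filter_switch: str
    filter_port: str
    delivery_port: str
    max_bytes: int


# Hypothetical inventory a controller could build by watching tapped traffic.
# Done by hand, this is the "figure out where the source IP is connected" step.
HOST_TABLE = {
    "10.1.1.25": TapPoint("mon-sw-rack12", "eth7"),
    "10.2.4.9": TapPoint("mon-sw-remote-dc", "eth3"),
}


def plan_capture(src_ip, delivery_port, max_bytes, host_table=HOST_TABLE):
    """Resolve a host to its tap point and describe the capture in one object."""
    tap = host_table.get(src_ip)
    if tap is None:
        raise LookupError(f"no tap has seen traffic from {src_ip}")
    return CapturePolicy(src_ip, tap.switch, tap.port, delivery_port, max_bytes)


policy = plan_capture("10.1.1.25", "capture-server-1", 50_000_000)
print(policy)
```

The point of the sketch is only that the operator supplies a host and a destination; the lookup of which switch and port to filter on happens centrally rather than box by box.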

In short, Big Switch think that the best way to monitor is to build a parallel switch fabric using white box switches to keep the cost manageable, and allow a controller to do the heavy lifting for you on the network, as well as offering some basic capture capability to make your life even simpler. The switch fabric can be created from one switch upwards, so there’s a relatively low cost of entry for this kind of solution, although the cost of taps is likely to be the same in any solution that uses them. In terms of the white box switches, the same switches are supported as for their Big Cloud Fabric but at present the fabric can only be used as either a switch fabric or a monitoring fabric; even though the hardware and software are very similar, they can’t perform the tasks interchangeably.

Limitations on scale are going to be largely based on limitations of the white box switches supported by Big Switch, so from what I can see this places this solution fairly firmly in the 10G realm; if you have higher speeds, I am not sure how well this will scale (though if Big Switch wants to comment and enlighten me, that would be awesome!)

That brings us rather neatly to another parallel monitoring network, offered by Gigamon.

Gigamon

Gigamon is what most people would probably perceive as a more ‘classical’ monitoring company, offering “tap and aggregation” products. Architecturally, a Gigamon Visibility Fabric monitoring network would probably look very similar to the Big Tap diagram above, with taps feeding into a set of devices dedicated to aggregating monitoring traffic and sending it on to monitoring and capture devices attached to them.

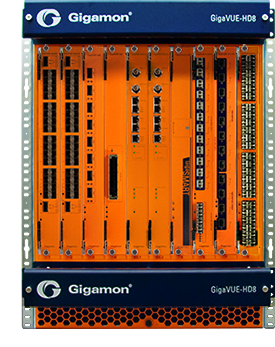

The monitoring devices however, are quite different to the white box switches supported by Big Switch. Gigamon offer aggregation products in a number of shapes and sizes ranging from the 1U Gigavue TA–1, designed for multiple low-utilized 10G links:

… through the Gigavue HD8, a 14U veritable behemoth of a box with a 2.5TB backplane, and supporting 1G, 10G, 40G and 100G interfaces.

I have to hand it to Gigamon; this is a seriously big chassis, supporting up to 64 x 40G interfaces, and up to eight chassis can be clustered. On the other hand this is no whitebox switch, and I’ve seen prices in the $50–70k range just for the bare HD8 chassis. The tiny TA1 I believe is in the $12–15k range, so clearly Big Switch have a competitive argument to make at the small to mid-sized end of the monitoring market simply because the cost of a typical whitebox switch (48 x 10G and 4 x 40G/16x10G) is more in the region of $6k.

Still, the Gigamon solution offers a few neat little trinkets that could have a number of helpful uses. For example:

Deduplication

If you tap a network at multiple points, you’re likely going to see the same packet delivered to your monitoring network as it traverses multiple taps or SPAN ports. This is great if you’re troubleshooting a problem, as you can see the progress of the packet as it moves through the network. It’s less good though if you’re running IDS for example, where you’d simply be running the same checks on the same frames over and over again, which is a waste of resources. Gigamon offers the option to rather neatly remove duplicate frames and only deliver a single copy to the receiving device. This is done by effectively remembering what traffic has been sent through for a definable period of time (by default I believe it was 50ms, but it can be up to 500ms). If a duplicate frame is seen within that window, it will be suppressed. But wait – frames do not look identical when they cross layer 3 boundaries, do they? The source and destination MAC addresses change, for starters. Similarly, a frame going over an 802.1q trunk link will have a header that looks a little different to that on a non-trunk link. Gigamon have of course thought of that, and you as the user can determine which elements are “allowed” to be different while still considering it to be the same underlying frame. Clever stuff indeed, though at the present time a frame encapsulated in VXLAN (for example) cannot be matched with the same unencapsulated frame on the other side of a VTEP.
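The windowed deduplication idea can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Gigamon's implementation: frames are plain dictionaries, the field names are invented for the example, and the default set of ignored fields (MACs, VLAN tag, and TTL, the last of which is an addition here since routers decrement it) is a guess at what a user might configure.

```python
import hashlib
from collections import OrderedDict


class Deduplicator:
    """Suppress frames already seen within a sliding time window."""

    def __init__(self, window_s=0.050, ignore=("src_mac", "dst_mac", "vlan", "ttl")):
        self.window_s = window_s      # 50 ms, matching the default recalled above
        self.ignore = set(ignore)     # fields allowed to differ between copies
        self._seen = OrderedDict()    # fingerprint -> time first seen, oldest first

    def _fingerprint(self, frame):
        # Hash only the fields that must match for two frames to be "the same".
        kept = sorted((k, v) for k, v in frame.items() if k not in self.ignore)
        return hashlib.sha1(repr(kept).encode()).hexdigest()

    def accept(self, frame, now):
        """Return True if the frame should be forwarded, False if it is a duplicate."""
        # Expire fingerprints that have aged out of the window.
        while self._seen:
            _, first_seen = next(iter(self._seen.items()))
            if now - first_seen <= self.window_s:
                break
            self._seen.popitem(last=False)
        fp = self._fingerprint(frame)
        if fp in self._seen:
            return False
        self._seen[fp] = now
        return True


# The same packet seen before and after a router hop (hypothetical values).
before = dict(src_mac="aa:aa", dst_mac="bb:bb", vlan=None, ttl=64,
              src_ip="10.0.0.1", dst_ip="10.0.1.1", ip_id=4242, payload=b"hello")
after = dict(before, src_mac="cc:cc", dst_mac="dd:dd", vlan=20, ttl=63)

dedup = Deduplicator()
print(dedup.accept(before, now=0.000))  # first copy is forwarded
print(dedup.accept(after, now=0.010))   # second copy inside the window is suppressed
print(dedup.accept(after, now=0.100))   # window has expired, so it is forwarded again
```

A sketch like this also shows why the VXLAN case is harder: the encapsulated copy carries an entirely different outer header, so simply ignoring a few fields is not enough to make the fingerprints line up.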

Netflow Generation

A rather nice feature is the ability for the Gigamon Visibility Fabric to generate Netflow records based on what it sees. At first you might wonder why this is useful given that many routers and switches will do this for you already? But think about it – especially in a heterogeneous network, you may be trying to deal with processing netflow (v5 or v9), j-flow, s-flow and more. In addition, the netflow cache on routers is notoriously small, forcing either extreme aggregation of data, or data sampling techniques to minimize how much data needs to be stored. Gigamon get around this by doing the hard work for you and letting your routers and switches get on with what they do best: moving frames and packets to their destinations. This alone might be a feature that could persuade some companies that this is a good solution for them.
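For anyone who hasn't looked inside a flow exporter, the core of generating flow records from raw packets is just aggregation by 5-tuple. The Python below is a rough, unsampled sketch of that idea; the packet tuple layout and the `FlowRecord` fields are assumptions made for the example, and it does not attempt the NetFlow export format itself or anything specific to Gigamon.

```python
from dataclasses import dataclass


@dataclass
class FlowRecord:
    packets: int = 0
    bytes: int = 0
    first_seen: float = 0.0
    last_seen: float = 0.0


def build_flows(packets):
    """Aggregate (ts, src, dst, proto, sport, dport, length) tuples into flows."""
    flows = {}
    for ts, src, dst, proto, sport, dport, length in packets:
        key = (src, dst, proto, sport, dport)  # the classic 5-tuple
        rec = flows.get(key)
        if rec is None:
            rec = flows[key] = FlowRecord(first_seen=ts)
        rec.packets += 1
        rec.bytes += length
        rec.last_seen = ts
    return flows


# Hypothetical tapped traffic: two packets of one flow, one of another.
sample = [
    (0.00, "10.0.0.1", "10.0.1.1", "tcp", 51000, 443, 60),
    (0.02, "10.0.0.1", "10.0.1.1", "tcp", 51000, 443, 1500),
    (0.03, "10.0.0.2", "10.0.1.9", "udp", 53000, 53, 80),
]

flows = build_flows(sample)
for key, rec in flows.items():
    print(key, rec)
```

Because every packet is counted, the table grows with the number of distinct flows, which is exactly the memory pressure that pushes routers toward small caches and sampling.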

Configuration seems to be fairly straightforward – choose what you want as your source, filter it if you choose, then use one of the additional actions like netflow generation or deduplication. This is, I think it’s fair to say, a “Cadillac” type solution. Yes, it’s expensive, but they offer a level of scale and smart aggregation that is really up there.

With all the expense of setting up a monitoring network, the question might be asked “Why do I need a separate network anyway?” And that’s where Pluribus come in to the picture.

Pluribus

I talked in a previous post about the Pluribus Server Switch™ – a device matching commodity Ethernet switching silicon with an x86 server, along with Fusion IO providing high speed storage. This combination makes it possible for the switch to capture traffic at fairly high rates.

The fact that Pluribus switches create a distributed managed fabric means it can act just like the Big Tap solution and allow configuration of a capture filter on any one device and see it applied across the entire fabric at the same time. You don’t need to know where to initiate a filtered capture because all nodes will look for matching traffic, and it can be viewed in real time like a tcpdump. Pluribus said previously that even their lowest specification switch can capture 700–800Mbps, and the 2U switches can capture 10Gbps, which is not bad going at all.

While it’s not netflow, the system also caches traffic patterns in a very similar way to netflow and allows you to view traffic from any host, for example, and get a list of everything that IP has contacted including layer3, layer 4 information and bytes passed, and so on. Want to limit it to a particular time window? No problem – Pluribus makes it simple to see the statistics for any time slice you want. That’s kind of cool – it’s like a network video recorder (for want of a better description).
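The "network video recorder" style of query, everything a host contacted within a chosen time slice, boils down to filtering stored connection records by time and summing bytes per destination. Here is a small Python sketch of that query; the record layout and the `contacts` function are invented for illustration and say nothing about how Pluribus actually stores or exposes this data.

```python
from collections import Counter


def contacts(records, host, start, end):
    """Bytes sent by `host` to each (dst, dport) with start <= ts < end.

    records: iterable of (ts, src, dst, dport, nbytes) tuples.
    """
    totals = Counter()
    for ts, src, dst, dport, nbytes in records:
        if src == host and start <= ts < end:
            totals[(dst, dport)] += nbytes
    return totals


# Hypothetical stored records; timestamps are seconds since some epoch.
history = [
    (100, "10.0.0.1", "10.0.1.1", 443, 1200),
    (130, "10.0.0.1", "10.0.1.1", 443, 800),
    (150, "10.0.0.1", "10.0.2.5", 22, 300),
    (400, "10.0.0.1", "10.0.1.1", 443, 9999),  # outside the slice queried below
    (140, "10.0.0.7", "10.0.1.1", 443, 50),    # a different host
]

for (dst, dport), nbytes in contacts(history, "10.0.0.1", 100, 200).most_common():
    print(dst, dport, nbytes)
```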

Cost wise, well, you’re buying the switch infrastructure and getting a monitoring solution for free. Except, well, what about IDS for example? How do we send a continuous feed of data to an attached monitoring device? Of that I am not clear. It seems to me that for specific traffic captures and user monitoring, this solution is a great, free tool with some pretty awesome capabilities, especially in terms of the capture rates. For IDS, for example, I think I’d still have to be tapping key links and pushing it through another aggregation solution to feed the IDS.

Conclusions

If you need to monitor your network, the vendors at NFD8 presented us with three quite different solutions. Gigamon offer the largest and most complex (and expensive) option; Big Switch offer a smaller but very capable and cost-effective out of band option; Pluribus offer a way to monitor traffic that adds no cost to the network, but perhaps can’t provide quite the same filtering and aggregation abilities as the other two. None of them is “best” as such, because each, to me, fits its own situation.

Pluribus’ sweet spot is for those who have installed their switches – it’s a free capability that means owners don’t have to go add on additional sniffers and taps and aggregation switches. This increases the value of the Pluribus switching product to customers and adds an incentive for people to invest in their product.

Big Switch makes the most of the low cost of bare metal network switches to provide something akin to Gigamon, but on a much lower cost basis. If you’re going to put in an out of band network (for example, if you’re not running Pluribus switches) then this could be a very manageable solution. In fact, for those who are concerned about running whitebox switches on their production network and prefer sticking with the “name brand” vendors, perhaps Big Tap is a good way to get comfortable with how they can work without having to have them in the production data path. That in turn may lead to more conversions to bare metal networking in the future once confidence is gained. Pluribus’ solution certainly challenges Big Switch because it’s free, but ultimately I don’t believe there is feature parity between the two solutions (and if I’m wrong there, I’ll come back and update this!).

Gigamon is really the only solution if you’re running and tapping 40G or 100G ports and need a solution that can seriously scale for you. At the lower end (e.g. the Gigavue TA1) I think honestly I’d be tempted to try out a single Big Tap switch instead, just because it’s cheaper. But as the line rates go up and the port density increases, it may be that Gigamon has the edge. They’ve also been in the industry somewhat longer than Big Switch and Pluribus, and their feature set and capabilities reflect that. This is decidedly the “gold plated” option of the three presented, and while the price tag leaves you in no doubt about that, if you need a comprehensive solution, Gigamon would be it.

Disclosures

I attended Networking Field Day 8 as a delegate at the invitation of Gestalt IT, who run these events. The events are funded by the sponsoring vendors who “buy” time to talk to the delegates, and that money in turn funds my travel, accommodation and food while I am there. I am not paid to attend this event (I take vacation from work and do it on my own time), and I am not obliged to blog, tweet, or otherwise publicize any sponsor of the event. Further, when I do choose to publish content related to the event, I do so at my own discretion, and the content and opinions expressed are mine alone without any interference from any other parties.

Please see my general disclosures page for more information.

What I heard is Big Switch scales better than Gigamon. How can you scale with Gigamon when it is so expensive. Also if you need one de-dup box, Big Tap can work with any NPB out there.

I dont see how you can get real pervasive monitoring solution from Gigamon.

Ok, you’ve confused me.

“How can you scale with Gigamon when it is so expensive”

I do not understand how cost has any relation to scalability beyond what you’re willing to pay. The bottom line is that the Gigamon HD8, for example, with up to 64 x 40G ports on it, scales way beyond Big Switch’s wildest dreams when using the regular Broadcom Trident 2 chipset, no matter how many bare metal switches you purchase. And Big Switch has no 100G ports available. The flip side of that is that if you want lots of 10G ports, Big Switch will undoubtedly come in significantly under anything Gigamon could dream of.

“I dont see how you can get real pervasive monitoring solution from Gigamon.”

On what basis do you make that claim? Or are you conflating cost with pervasiveness now?

“Also if you need one de-dup box, Big Tap can work with any NPB out there.”

Absolutely; you could choose to deploy a Big Tap fabric feeding data to a network packet broker. I’d argue that Gigamon simply chooses to embed the NPB functionality within their monitoring fabric rather than having it on a stick somewhere else. There may be good and bad to doing so; I suspect at the low bandwidth end of things, it’s an expensive overhead that prices them out of many people’s options. At high bandwidth, it’s probably the only way to reasonably process the data. After all, you don’t want to be piping 100G around the network looking for an NPB to process it because that sure as heck isn’t scalable.

http://www.bigswitch.com/blog/2014/09/15/big-tap-40-an-sdn-replacement-to-proprietary-network-packet-brokers-npbs

– Big Tap supports multiple switches with 40G interfaces. Extensive HCL including Dell.

– Big Tap has features which will help you do pervasive monitoring (like Tap Tracker, Host Tracker, Deep packet matching etc)

– To go pervasive, Big Tap has remote DC monitoring to tap every location.

– Tested Scale of the fabric is 50-80 switches independent of switch throughput.

It is the most high-scaled (based on SDN architecture), feature-rich, low-cost monitoring solution out there.

“It is the most high-scaled (based on SDN architecture), feature-rich, low-cost monitoring solution out there.”

Are you sure you don’t work for the Big Switch marketing department? 😉

I confess that I had not noticed that there is now a 32 x 40G switch on the HCL. Prior to that, they’ve all been nx10G with 2 to 6 x 40G ports on them, using the slower 720Gbps Trident-II. That certainly helps push the Big Tap significantly up in scale, so I appreciate the link there.

I would posit that you can have pervasive monitoring with either Big Switch or Gigamon; the taps are going to cost the same regardless of solution, so it will come down to how many, and what speed, interfaces you need to monitor. Both Big Tap and Gigamon offer remote-site solutions for monitoring while retaining your tools in a central location.

Big Tap without question though wins on cost, and with the 32x40G I’ll grant you that the competition is even fiercer against the 100G-capable Gigamon solution.

>> “Are you sure you don’t work for the Big Switch marketing department? ”

No, I don’t.

LOL – I had on my TODO list to reach out to you John about this nice article. Thanks for the overview. I did want to point out that we do have support for 40G ports and our dataplane (and management plane) scaling goes quite a ways past even the single, very large boxes that our competitors have, but it looks like someone has beaten me to it – thanks kooljava2.

Fwiw, the customer diagram we showed in the field day is all 40G ports and there are 200+ 40G “filter”/input ports in that deployment. Even before you start talking about “financial” scaling, it would be fairly difficult to build/manage a solution like this from more traditional NPB components. It’s just the difference of big “box-by-box” versus a fabric of switches managed from a central location.

Thanks again for the mention John!

I agree that in particular, the management aspect of things with Big Tap is incredibly simple for the end user. That alone has big value 🙂

I’m less clear, honestly, on how easy it is to manage multiple Gigamon boxes.

And how do packet flow switches from Netscout or NPBs from VSS (who was bought by Netscout) fit into this discussion?